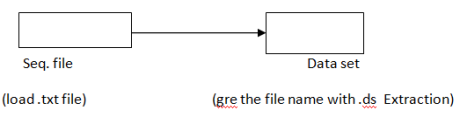

To overcome the limitations of sequential file, we use Data set

(That is Transformation jobs are dependent on extraction jobs. And loading jobs are dependent on Transformation jobs ).

Virtual :- Data moving through the link is virtual, (temporary) Persistent :- Data created with Data set is persistent, (permanent) In target file, C: /data/output. DS àpersistent àpermanent

Data set is not a single file, but has multiple files

Schema details and address of data à Structure () table definition C:/data/file. ds

Contains data in Native format C:/IBM/Information Server / Server/data set/ file. Ds

Resides in operating system.

Extraction:

Transformation  Here are properties Paste the Extraction Target path

Here are properties Paste the Extraction Target path![]() Columns àLoad

Columns àLoad

To view Data set outside the job

Dataset utilities

Tools ![]() Data set Management

Data set Management ![]() Shows the list of files

Shows the list of files ![]() Select the file

Select the file ![]() ok

ok ![]() Data set Management window opens

Data set Management window opens ![]() Here we can view data, copy data, delete data. Data Set version

Here we can view data, copy data, delete data. Data Set version

Step 1: Select the job properties Symbol

Step 1: Select the job properties Symbol ![]() parameters

parameters ![]() Add Environmental variable

Add Environmental variable ![]() Select APT – WPITE –DS-VERSION –ok

Select APT – WPITE –DS-VERSION –ok![]() Now Compile and Run

Now Compile and Run ![]() While RUN, We can Select the version Data set operators It does not have an operator generally but uses copy operator To see the operators of each stage : Job properties

While RUN, We can Select the version Data set operators It does not have an operator generally but uses copy operator To see the operators of each stage : Job properties ![]() Generated ASH How to flush Data in the Dataset ? File set :- (.fs extension) File set is a file stage, which is used for staging the data when we design jobs.

Generated ASH How to flush Data in the Dataset ? File set :- (.fs extension) File set is a file stage, which is used for staging the data when we design jobs. ![]() Similarities between Dataset and file set

Similarities between Dataset and file set

Differences D.S

F.S

Internal use Data that is created with Dataset can be used only for internal use. That is, .ds format is the only w. r. t Dataset ![]() file set Creation is same as Dataset creations Sequential Stage Target properties

file set Creation is same as Dataset creations Sequential Stage Target properties  Target properties File =? File update mode:

Target properties File =? File update mode:

Create the file, if the target file does not exit Options Clean up on failure = True First line is column name = False Reject mode = Continue Step 1 Select the job properties Symbol ![]() parameters

parameters ![]() Add Environmental variable

Add Environmental variable ![]() Select APT – CLOBER – OUTPUT

Select APT – CLOBER – OUTPUT ![]() Now compile and Full

Now compile and Full ![]() At Run time APT – CLOBER – OUTPUT = False

At Run time APT – CLOBER – OUTPUT = False ![]() Aborts APT – CLOBER – OUTPUT = True

Aborts APT – CLOBER – OUTPUT = True ![]() create file Clean up on failure :- (Works with Append mode) If true = automatically clears partially loaded records, if the job is failed for any restart from the point where it has drooped. Development and Debug Stage Divided into three groups

create file Clean up on failure :- (Works with Append mode) If true = automatically clears partially loaded records, if the job is failed for any restart from the point where it has drooped. Development and Debug Stage Divided into three groups

Row generator It is a development Stage, Which generates Sample data (sys/user defined) And Supports only one output. Column generator It is a development Stage, Which generates columns with Sample data (sys/user defined) And Supports input and output.

![]() Development Stage creates Sample data, Suppose if the Client does not give data, we create the Structure and sample data. Row generator :- (one input)

Development Stage creates Sample data, Suppose if the Client does not give data, we create the Structure and sample data. Row generator :- (one input) ![]() System Generated

System Generated  Right click on Row generator

Right click on Row generator ![]() properties

properties ![]() No. of Records = 100

No. of Records = 100 ![]() okàcolumn

okàcolumn ![]() load

load ![]() Select the file

Select the file ![]() ok

ok ![]() view data User-defined Properties

view data User-defined Properties ![]() No. of Records

No. of Records ![]() columns

columns ![]() load

load ![]() click on Serial fileàEdit column Meta data window opens

click on Serial fileàEdit column Meta data window opens ![]() Generator

Generator ![]() type (cycle / random)

type (cycle / random)

Var char Generator Algorithm = cycle Value = ? (Here values takes n, no. of name) Algorithm = Alphabet String = ? (String data only 1 name, and display each Alphabet ) That is string = abc Output is : a b c Integer

Desired to gain proficiency on DataStage? Explore the blog post on DataStage training to become a pro in DataStage.

Type = Random ![]() limit seed signed Data

limit seed signed Data

Column Generator :- (only 1 input /output)  Column Generator is associated with

Column Generator is associated with

Based on this we can group all Employee into 1 group Right click on column Generator

Properties

With in options

Column method = Explicit

Column to Generator = COMPANEY

Column to Generator = COUNTRY

Click, on output options

Left hold the mouse, and drag it and perform Mapping

Ok View data [Mapping is needed for column Generator, because it has both input and output] User-defined C.G

Properties

Output

Columns

Double click on country

Generator

Algorithm = cycle

Value = IBM

Ok

You liked the article?

Like: 0

Vote for difficulty

Current difficulty (Avg): Medium

TekSlate is the best online training provider in delivering world-class IT skills to individuals and corporates from all parts of the globe. We are proven experts in accumulating every need of an IT skills upgrade aspirant and have delivered excellent services. We aim to bring you all the essentials to learn and master new technologies in the market with our articles, blogs, and videos. Build your career success with us, enhancing most in-demand skills in the market.