Job sequencing controls the order in which jobs are executed.

Master Job

A master job can run multiple jobs under a single name.

Trigger

A trigger checks the result of job 1: if it finishes without errors, job 2 is run.
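The trigger idea can be illustrated outside DataStage with a minimal Python sketch. This is not the DataStage API: `run_in_sequence`, the status constants, and the job callables are hypothetical names used only to show that a later job runs only when the previous one finished without errors.

```python
# Hypothetical status values; DataStage has its own job status codes.
OK, WARNINGS, FAILED = "ok", "warnings", "failed"


def run_in_sequence(jobs):
    """Run (name, callable) pairs in order.

    Each callable returns a status. The next job is triggered only
    when the previous one returned OK; otherwise the chain stops.
    """
    results = []
    for name, job in jobs:
        status = job()
        results.append((name, status))
        if status != OK:
            break  # trigger condition not met, so later jobs do not run
    return results


if __name__ == "__main__":
    log = run_in_sequence([
        ("job1", lambda: OK),
        ("job2", lambda: FAILED),
        ("job3", lambda: OK),  # never runs because job2 failed
    ])
    print(log)
```

Here the condition is "finished OK"; a real sequence can attach other conditions to a trigger.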

Error handling

Error handling applies when a job is aborted.

Notification

When everything succeeds, we should get a confirmation mail reporting the success.

Wait for file

The sequence waits until the expected file is found and loaded into the source.

Checkpoints

If a job aborts partway through, checkpoints make sure that the sequence restarts from the point where it failed.
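The restart behaviour can be sketched in Python as follows. This is only an illustration of the concept, not how DataStage stores its checkpoints: the JSON checkpoint file and the function name `run_with_checkpoints` are assumptions made for the example.

```python
import json
from pathlib import Path


def run_with_checkpoints(jobs, checkpoint_file):
    """Run (name, callable) pairs, skipping jobs recorded as completed.

    A job that raises aborts the run and leaves the checkpoint file in
    place, so the next call resumes at the job that failed.
    """
    path = Path(checkpoint_file)
    done = set(json.loads(path.read_text())) if path.exists() else set()
    executed = []
    for name, job in jobs:
        if name in done:
            continue  # completed in an earlier run, do not rerun
        job()
        executed.append(name)
        done.add(name)
        path.write_text(json.dumps(sorted(done)))  # record progress
    path.unlink(missing_ok=True)  # whole sequence finished: clear checkpoints
    return executed
```

On a rerun after a failure, only the failed job and the jobs after it are executed.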

Parameter Mapping

A parameter defined in one job cannot be used directly in another job. To pass it across, we use parameter mapping.
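As a rough illustration of the idea, the Python sketch below copies values from sequence-level parameters into the parameter names a job expects. The function `map_parameters` and the parameter names in the example are hypothetical; in DataStage the mapping is configured in the Job Activity properties rather than written as code.

```python
def map_parameters(sequence_params, mapping):
    """Build a job's parameter values from the sequence's parameters.

    mapping maps each job parameter name to the sequence parameter
    that supplies its value.
    """
    missing = [src for src in mapping.values() if src not in sequence_params]
    if missing:
        raise KeyError(f"sequence parameters not defined: {missing}")
    return {job_param: sequence_params[seq_param]
            for job_param, seq_param in mapping.items()}


if __name__ == "__main__":
    # Hypothetical names: the sequence holds "deptno", the job expects "dno".
    print(map_parameters({"deptno": 10}, {"dno": "deptno"}))
```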

Job sequencing Stages are categorized into 4 groups

New → Select Sequence job → click on View → click on the Palette option → the palette opens

Designing a Master Job

To run a set of jobs in a specific sequence: click View → Repository → select Jobs → drag and drop the required jobs.

Drag the Job Activity from the palette → right-click → browse to the job

Job Activity

Supports multiple outputs but only one input

Trigger

Sequencer

Multiple inputs and multiple outputs

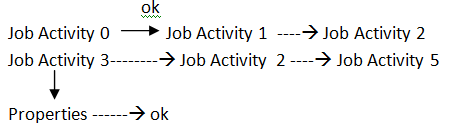

Here we are executing the trigger condition where the job finished with no errors or warnings.

Error handling

If a job aborts, we use the Terminator activity.

Master page


Exception handling → Notification Activity → Terminator

If the server fails → Exception handling

Automatically handle activities that fail → only when this option is enabled is the exception handling performed, after which the job continues
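The Exception handling → Notification Activity → Terminator chain can be pictured with a small Python sketch. It is an analogy under stated assumptions, not DataStage behaviour in detail: `run_sequence`, the `auto_handle_failures` flag, and the `notify` callback are hypothetical names standing in for the sequence option and the activities.

```python
def run_sequence(jobs, notify, auto_handle_failures=True):
    """Run (name, callable) pairs in order.

    With auto_handle_failures enabled, a failing job is routed to the
    exception path: a notification is sent and the sequence terminates
    cleanly. With it disabled, the error propagates to the caller.
    """
    for name, job in jobs:
        try:
            job()
        except Exception as exc:
            if not auto_handle_failures:
                raise
            notify(f"{name} aborted: {exc}")  # notification step
            return "terminated"               # terminator step
    return "finished"
```

Passing the notifier in as a callback keeps the sketch independent of how the mail is actually sent.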

Notification Activity

Job finishes – mail has to be delivered

Aborts – mail

Server down – mail
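The three cases above can be sketched with Python's standard `email.message` module. The event names, subject lines, and the function `build_notification` are assumptions for illustration; the Notification Activity itself is configured in the Designer, not in code.

```python
from email.message import EmailMessage

# Hypothetical subject lines for the three notification cases.
SUBJECTS = {
    "finished": "Sequence finished successfully",
    "aborted": "Sequence aborted",
    "server_down": "Server is down",
}


def build_notification(event, sender, recipient, details=""):
    """Build the mail for one of the three notification cases."""
    if event not in SUBJECTS:
        raise ValueError(f"unknown event: {event}")
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = SUBJECTS[event]
    msg.set_content(details or SUBJECTS[event])
    return msg
```

The resulting message could then be sent with `smtplib.SMTP(host).send_message(msg)`, where `host` is whatever SMTP server is available in your environment.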

Wait for file Activity

Properties → browse to the record file → Wait for file to appear → Do not timeout
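What the activity does can be approximated by a simple polling loop. The Python sketch below is an assumption-laden illustration, not the activity's implementation: `wait_for_file`, `timeout`, and `poll_interval` are hypothetical names, and `timeout=None` plays the role of the "do not timeout" setting.

```python
import time
from pathlib import Path


def wait_for_file(path, timeout=None, poll_interval=1.0):
    """Block until the file exists.

    timeout=None waits indefinitely ("do not timeout"). Otherwise the
    function returns False once the timeout (in seconds) has elapsed.
    """
    deadline = None if timeout is None else time.monotonic() + timeout
    while not Path(path).exists():
        if deadline is not None and time.monotonic() >= deadline:
            return False
        time.sleep(poll_interval)
    return True
```

A caller would typically start the load job only when this returns True.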

Checkpoints

Job properties

Add checkpoints so sequence is restartable on failure

Click on Job Activity

We can see the option → Do not checkpoint run

Parameter Mapping

Jobs → Insert parameter → Job properties → Add parameters

Oracle | oracle parameter | parameter set

D no | deptn | Integer
